"Was the Ford Pinto, with all its imperfections revealed in crash tests, not designed?"

This statement undercuts the whole design argument: is God a poor engineer who didn't heed Murphy's Law?

As ridiculous as that analogy is, Karmen at Chaotic Utopia glommed on to a doozy that all the other science bloggers had missed.

The article called evolution a "simple" process. In our experience, does a "simple" process generate the type of vast complexity found throughout biology?

I can see how this must've really irked Karmen since one of her regular features is Friday Fractals. You see, fractals are complex patterns generated from simple algorithms.

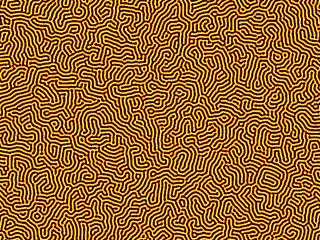

I'm afraid my fractals aren't quite as good as Karmen's since I made mine with the free software GIMP. The point remains that a fractal is a perfect example of a "complex design" that's generated by a few simple instructions.
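To show just how few instructions that can be, here is a minimal Python sketch of the best-known example, the Mandelbrot set. It is not how GIMP renders its fractals, and the function names, viewing window and ASCII shading are my own choices for illustration. The whole "algorithm" is one line: keep replacing z with z*z + c and count how long it takes to escape.

```python
def escape_time(c: complex, max_iter: int = 50) -> int:
    """Iterate z -> z*z + c from z = 0 and return the step at which |z| first exceeds 2.

    Points that never escape within max_iter steps are treated as inside the set.
    """
    z = 0j
    for n in range(max_iter):
        if abs(z) > 2.0:
            return n
        z = z * z + c
    return max_iter


def render(width: int = 60, height: int = 24, max_iter: int = 50) -> str:
    """Draw the region [-2.0, 0.8] x [-1.2, 1.2] as ASCII art, one character per point."""
    shades = " .:-=+*#%@"  # darker character = slower escape
    rows = []
    for j in range(height):
        y = -1.2 + 2.4 * j / (height - 1)
        row = []
        for i in range(width):
            x = -2.0 + 2.8 * i / (width - 1)
            n = escape_time(complex(x, y), max_iter)
            row.append(shades[(len(shades) - 1) * n // max_iter])
        rows.append("".join(row))
    return "\n".join(rows)


if __name__ == "__main__":
    print(render())
```

Everything intricate in the printed picture comes from that single update rule applied over and over; the rest of the code is just bookkeeping for drawing it.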

The fun continues. Mark Chu-Carroll of Good Math, Bad Math expatiated upon the theme by bringing cellular automata (CA) into the mix.
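For anyone who wants to watch such an automaton run, here is a minimal Python sketch of a one-dimensional CA: a row of on/off cells, each updated from its own state and the states of its left and right neighbors. The eight rules are copied from the table in Mark's post (by my reading they work out to what is usually numbered rule 110). The function names, the wrap-around edges and the single lit cell at the start are my own assumptions.

```python
# (current, left, right) -> new state, with 1 = On and 0 = Off.
# Copied row by row from the rule table in Mark's post.
RULES = {
    (1, 1, 1): 0,
    (1, 1, 0): 1,
    (1, 0, 1): 1,
    (1, 0, 0): 1,
    (0, 1, 1): 1,
    (0, 1, 0): 0,
    (0, 0, 1): 1,
    (0, 0, 0): 0,
}


def step(cells):
    """Update every cell at once from the previous row.

    Assumption: the row wraps around, so the first and last cells are neighbors.
    """
    n = len(cells)
    return [RULES[(cells[i], cells[i - 1], cells[(i + 1) % n])] for i in range(n)]


def run(width=64, steps=30):
    """Start with a single lit cell at the right edge and collect each generation."""
    cells = [0] * width
    cells[-1] = 1
    history = [cells]
    for _ in range(steps):
        cells = step(cells)
        history.append(cells)
    return history


if __name__ == "__main__":
    for row in run():
        print("".join("#" if c else " " for c in row))
```

Printing one generation per line turns time into the vertical axis, which is the usual way these patterns are drawn.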

Mark sets the scene like this: "For the simplest example of this, line up a bunch of little tiny machines in a row. Each machine has an LED on top. The LED can be either on, or off. Once every second, all of the CAs simultaneously look at their neighbors to the left and to the right, and decide whether to turn their LED on or off based on whether their neighbors lights are on or off. Here's a table describing one possible set of rules for the decision about whether to turn the LED on or off."

| Current State | Left Neighbor | Right Neighbor | New State |
|---------------|---------------|----------------|-----------|
| On            | On            | On             | Off       |
| On            | On            | Off            | On        |
| On            | Off           | On             | On        |
| On            | Off           | Off            | On        |
| Off           | On            | On             | On        |
| Off           | On            | Off            | Off       |
| Off           | Off           | On             | On        |
| Off           | Off           | Off            | Off       |

There you have two examples of "complex designs" spawned by "simple processes." Before I bring up a third, I should mention MarkCC's point that the above CA is Turing complete. Nice segue, since the next image will be a Turing Pattern. This "design" is so named because it derives from the principles laid out in the great mathematician Alan Turing's 1952 paper The Chemical Basis of Morphogenesis. In it, Turing demonstrates how "complex" natural patterns such as a leopard's spots (or any embryological development) can be generated from simple chemical interactions. This ScienceDaily article describes it thus:

Based on purely theoretical considerations, Turing proposed a reaction and diffusion mechanism between two chemical substances. Using mathematics, he proved that such a simple system could produce a multitude of patterns. If one substance, the activator, produces itself and an inhibitor, while the inhibitor breaks down or inhibits the activator, a spontaneous distribution pattern of substances in the form of stripes and patches can be created. An essential requirement for this is that the inhibitor can be distributed faster through diffusion than the activator, thereby stabilizing the irregular distribution. This kind of dynamic could determine the arrangement of periodic body structures and the pattern of fur markings.
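Here is a rough Python sketch of that activator/inhibitor idea on a one-dimensional ring of cells. To be clear about what is assumed: the equations are a Gierer-Meinhardt-style textbook stand-in, not the model from Turing's paper or from the Freiburg study, and every parameter value (`d_act`, `d_inh`, `inh_rate`, the noise level, the step size) is my own guess, picked so that the inhibitor diffuses 20 times faster than the activator. The activator `a` boosts itself and the inhibitor `h`; the inhibitor holds the activator back.

```python
import random


def simulate(cells=100, steps=6000, dt=0.01, d_act=1.0, d_inh=20.0,
             inh_rate=2.0, noise=0.01, seed=0):
    """Explicit-Euler integration of a two-chemical reaction-diffusion system.

    da/dt = d_act * lap(a) + a*a/h - a            (self-activation, damped by h)
    dh/dt = d_inh * lap(h) + inh_rate*(a*a - h)   (h is produced by a, then decays)

    Assumptions: grid spacing of 1, wrap-around edges, and a start at the uniform
    state a = h = 1 with a little random noise added to the activator.
    """
    rng = random.Random(seed)
    a = [1.0 + noise * (rng.random() - 0.5) for _ in range(cells)]
    h = [1.0] * cells
    for _ in range(steps):
        new_a, new_h = [], []
        for i in range(cells):
            left, right = i - 1, (i + 1) % cells
            # Discrete Laplacian: how much a cell differs from its two neighbors.
            lap_a = a[left] + a[right] - 2.0 * a[i]
            lap_h = h[left] + h[right] - 2.0 * h[i]
            new_a.append(a[i] + dt * (d_act * lap_a + a[i] * a[i] / h[i] - a[i]))
            new_h.append(h[i] + dt * (d_inh * lap_h + inh_rate * (a[i] * a[i] - h[i])))
        a, h = new_a, new_h
    return a


if __name__ == "__main__":
    activator = simulate()
    mean = sum(activator) / len(activator)
    # Mark cells with above-average activator so any patches show up as runs of '#'.
    print("".join("#" if v > mean else "." for v in activator))
```

With no noise at all the row stays perfectly uniform forever, so whatever patches appear in a noisy run come from the two rules amplifying tiny random differences, not from anything drawn in by hand. Swapping the diffusion rates (making the activator the fast one) is a quick way to check the "inhibitor must spread faster" requirement for yourself.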

I generated the following image using the Turing Pattern plug-in for GIMP.

The kicker is that the above-mentioned ScienceDaily article is entitled Control Mechanism For Biological Pattern Formation Decoded, and it's about how biologists and mathematicians in Freiburg (hence the 'German flag' color scheme on my Turing Pattern) have found an example in nature of just what Turing predicted.

Biologists from the Max Planck Institute of Immunobiology in Freiburg, in collaboration with theoretical physicists and mathematicians at the University of Freiburg, have for the first time supplied experimental proof of the Turing hypothesis of pattern formation. They succeeded in identifying substances which determine the distribution of hair follicles in mice. Taking a system biological approach, which linked experimental results with mathematical models and computer simulations, they were able to show that proteins in the WNT and DKK family play a crucial role in controlling the spatial arrangement of hair follicles and satisfy the theoretical requirements of the Turing hypothesis of pattern formation. In accordance with the predictions of the mathematical model, the density and arrangement of the hair follicles change with increased or reduced expression of the WNT and DKK proteins.

There you go, Mr. Luskin: an example from natural biology of a simple process generating vast complexity. To your Woo, I say Schwiiing!
